AI and Cybercrime: The Hidden Dangers of Cutting-Edge Tech

AI and Cybercrime: The Hidden Dangers of Cutting-Edge Tech

The swift progress of generative AI has opened up incredible possibilities, but it’s also cranked up the heat on cyber threats. OpenAI’s latest report, “Disrupting Malicious Uses of AI,” sheds light on how bad actors are weaving AI into their attack strategies, making them bigger, smarter, and sneakier than ever. Let’s dive into the report’s findings, explore the shifting threat landscape, and share some tips on how to protect yourself from AI-powered cybercrime.

Key Takeaways from the Report

OpenAI’s analysis uncovers some crucial insights:

AI: The Ultimate Efficiency Booster

Cybercriminals are using AI to supercharge their operations, creating content, translating languages, and managing social media messages at lightning speed. The real game-changer here is the pace and adaptability of these attacks, not necessarily the invention of brand-new methods.

The Perfect Blend: Human and AI Teamwork

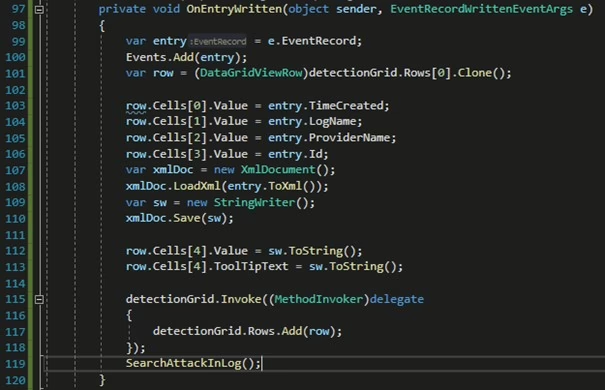

Many cyber schemes these days mix AI with human expertise. For example, hackers might ask for small code snippets to slip past AI security measures, then piece them together to build powerful malware.

State-Sponsored Actors and AI

OpenAI has spotted and shut down accounts linked to state-backed groups using AI for spying, scripting, and spreading false information. While AI use is mostly just a step up from traditional methods, these findings show how nation-state threats are adapting to an AI-driven world.

Scam Networks and Job Fraud

Scam centers are turning to AI to create fake executive bios and craft messages in multiple languages. Interestingly, OpenAI reckons that ChatGPT is used more often to catch scams than to create them.

The Dual-Use Dilemma

Many shady activities walk the fine line between legal and illegal use, making it tough for AI vendors to build proper safeguards.

What’s New (and What’s Not)

The fusion of AI and cyber threats is shaking things up in a few key areas:

Social Engineering on Steroids

AI enables faster, more localized, and context-aware phishing campaigns. Tools like FraudGPT and WormGPT are ramping up threat actor capabilities, although old-school methods still rule the roost.

Deepfakes and Impersonation

The ability to clone voices and generate deepfake videos poses serious risks for social engineering and fraud. OpenAI’s Sora video tool has come under fire for its potential misuse.

Lowering the Barrier to Entry

AI allows even low-skill attackers to pull off sophisticated attacks, making cyber threats more accessible and raising risks across the board.

Increasing Stealth and Evasion

Attackers are getting better at covering their AI tracks, making detection more challenging than ever.

However, some things stay the same:

Fundamental Attack Categories

Core attack methods like phishing, credential theft, and malware remain largely unchanged. AI is amplifying these threats rather than transforming them.

Barriers to Adoption

Integrating AI into fully autonomous hacking operations is complex, slowing hacker adoption due to challenges in model hosting, detection avoidance, and integration.

Defensive Advantage

AI can also empower defenders through automated detection, anomaly monitoring, and threat intelligence. OpenAI’s approach to detecting and shutting down adversarial accounts is a prime example.

Emerging Risks and Attack Patterns

Several evolving threat patterns deserve our attention:

- Prompt Injection/Jailbreak: Attackers manipulate LLM inputs to bypass safeguards, potentially leaking internal secrets or executing harmful code.

- Smishing Campaigns: Using generative models to craft personalized SMS phishing content at scale, leading to potential account takeovers.

- Vibe-Hacking/Agentic AI Abuse: Fully agentic AI systems executing end-to-end operations, including psychological tactics and adaptive workflows, pose significant emerging threats.

For more information, check out the OpenAI report on disrupting malicious uses of AI.