Top 10 AI Threats: Safeguard Your Business Now!

Artificial Intelligence (AI) is revolutionizing industries, supercharging productivity, and driving efficiency like never before. But here’s the catch: cybercriminals are also leveraging AI to exploit vulnerabilities on an unprecedented scale. From deepfake scams to hijacked meetings, attackers are using AI in ways that traditional security measures can’t always mitigate. Stay ahead of the curve by understanding these top 10 AI-driven threats that every business should be aware of:

1. Unauthorized AI Meeting Assistants

AI bots are increasingly crashing private meetings, recording or transcribing sensitive conversations without proper authorization. This not only raises privacy red flags but also creates significant compliance and data sovereignty issues. Many free AI-based assistants share data with third parties to “improve services,” leading to potential data monetization.

2. Data Leakage via Public Large Language Models (LLMs)

Employees often paste sensitive data into public LLMs like ChatGPT, inadvertently exposing proprietary or regulated information through model logs or retraining datasets. This emerging “Shadow IT” challenge is a major headache for Chief Information Security Officers (CISOs).

3. AI-Generated Deepfake Social Engineering

Voice and video deepfakes are eroding trust by impersonating executives, politicians, or even family members with alarming realism. These sophisticated scams are becoming increasingly difficult to detect, leading to high-profile fraud cases.

4. AI-Enhanced Spear-Phishing & Business Email Compromise (BEC)

Gone are the days of poorly crafted phishing emails. With AI, criminals can now mine LinkedIn, social media, and corporate websites to create hyper-personalized phishing scams that bypass most defenses and trick even the most vigilant executives.

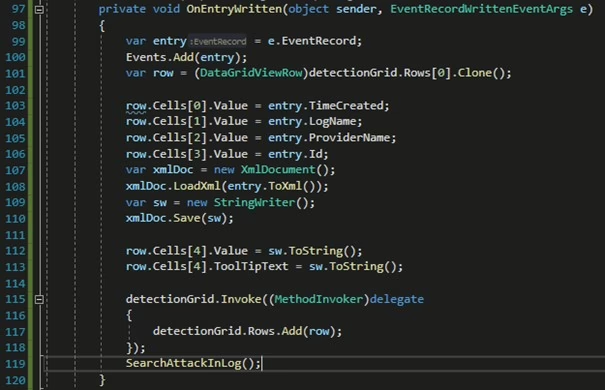

5. Autonomous AI Attack Agents

Tools like WormGPT and FraudGPT act as autonomous cyber mercenaries, capable of writing malware, probing networks, and escalating attacks at machine speed with minimal human oversight. These tools represent a significant leap in the sophistication of cyber threats.

6. Prompt Injection & Model Inversion

Hackers exploit AI weaknesses by manipulating prompts (“jailbreaking” models) or forcing them to reveal hidden training data. These attacks can exfiltrate secrets, bypass safety controls, or weaponize AI outputs, posing a severe risk to data security.

7. Unauthorized AI Scraping

Rogue AI bots scrape internal systems, wikis, and emails to build massive, exploitable datasets. These bots account for a growing percentage of network traffic and can overload infrastructure, leading to significant operational disruptions.

8. Synthetic Identities Testing Defenses

Criminals use “deepfake sentinels” to probe companies’ fraud detection systems. Once the weaknesses are mapped, large-scale fraud campaigns follow, making it crucial for businesses to stay ahead of these evolving threats.

9. Training-Data Leakage

Some LLMs regurgitate private data from their training sets, accidentally exposing intellectual property, personal records, or regulated information. This leakage can lead to severe compliance violations and reputational damage.

10. AI Hallucinations in Critical Domains

AI doesn’t just lie; it hallucinates. In healthcare, finance, or legal contexts, fabricated data or advice can cause operational failures, compliance violations, or reputational damage. This unpredictability makes AI a double-edged sword.

Best Practices Checklist for SMBs & Enterprises

- Policy on LLM Use: Update your Acceptable Use Policy (AUP) to allow only approved AI tools. Block public LLM use with regulated data and train employees on AI threats.

- Secure Meeting Controls: Restrict meeting access, vet AI assistants, and require consent before recordings. Remove unapproved meeting attendees.

- Deepfake & Phishing Awareness: Train employees to spot AI-generated media. Run deepfake phishing simulations and establish executive safewords.

- Zero Trust + EDR/XDR: Detect AI-generated malware with advanced endpoint and browser-level defenses to enhance security.

- AI Input Validation: Sanitize prompts and block injection attempts with OWASP-based filters to prevent data breaches.

- Access & Secrets Management: Protect Retrieval-Augmented Generation (RAG) pipelines and enforce least privilege access to minimize risks.

- Sensitive Content Monitoring: Use watermarking, leak detection, and AI output monitoring to safeguard sensitive information.

- Enhanced Authentication: Deploy hardware or authenticator app Multi-Factor Authentication (MFA), adopt Passkeys, and include anti-deepfake validation in training programs to strengthen security.

- AI Security Testing: Red-team AI systems regularly and test for adversarial prompts and inversion exploits to identify vulnerabilities.

- Governance & Vendor Risk: Audit AI vendors, require compliance assurances, and document AI approvals to ensure accountability and security.

The AI threat landscape is evolving rapidly. While firewalls and antivirus tools are essential, your employees remain the frontline of defense. Equip them to spot and respond to AI-driven attacks with comprehensive training and awareness programs.

For more information on AI threats and how to protect your business, visit CyberHoot’s AI Resource Center.